Adversarial Text to Continuous Image Generation

Kilichbek Haydarov, Aashiq Muhamed, Xiaoqian Shen, Jovana Lazarevic, Ivan Skorokhodov, Chamuditha Jayanga Galappaththige, Mohamed Elhoseiny

CVPR 2024

Abstract

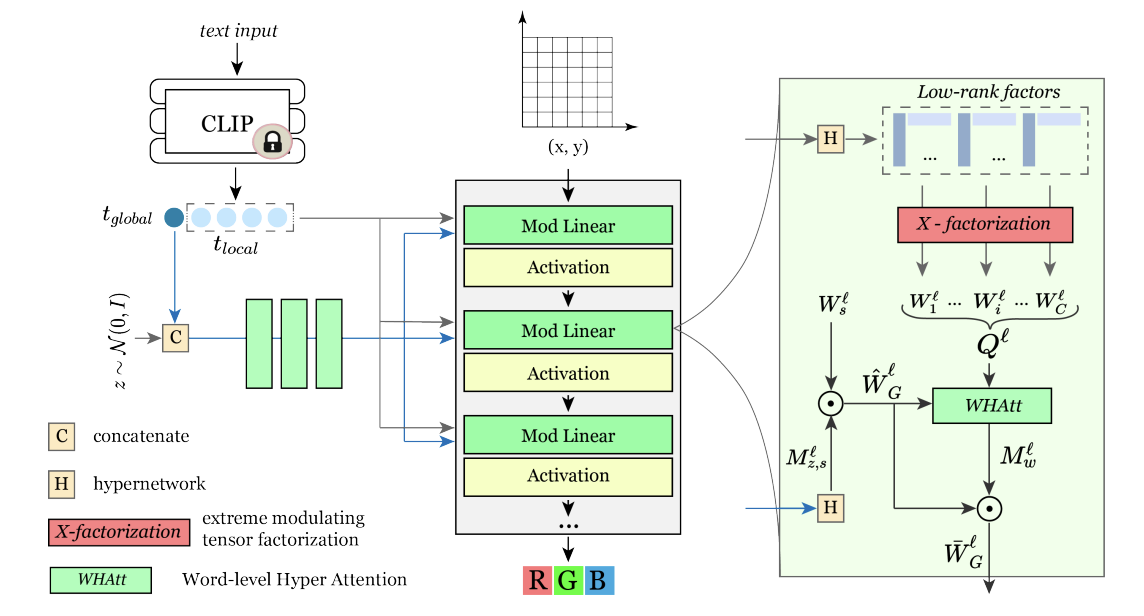

Existing GAN-based text-to-image models treat images as 2D pixel arrays. In this paper, we approach the text-to-image task from a different perspective, where a 2D image is represented as an implicit neural representation (INR). We show that straightforward conditioning of the unconditional INR-based GAN method on text inputs is not enough to achieve good performance. We propose a word-level attention-based weight modulation operator that controls the generation process of INR-GAN based on hypernetworks. Our experiments on benchmark datasets show that the resulting model, HyperCGAN, achieves performance competitive with existing pixel-based methods and retains the properties of continuous generative models.
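To make the idea of an INR concrete, here is a minimal sketch of an image stored as a function from pixel coordinates to colors, which can be sampled at any resolution. It is a generic illustration and not the HyperCGAN architecture: the names `make_inr` and `render`, the layer sizes, the sine activation, and the random placeholder weights are all assumptions. In the paper's setting, the weights would be produced by a hypernetwork conditioned on the text.

```python
import math
import random


def make_inr(hidden=16, seed=0):
    """Build a tiny MLP f(x, y) -> (r, g, b) with random placeholder weights."""
    rng = random.Random(seed)
    w1 = [[rng.gauss(0, 1) for _ in range(2)] for _ in range(hidden)]
    b1 = [rng.gauss(0, 1) for _ in range(hidden)]
    w2 = [[rng.gauss(0, 1) / math.sqrt(hidden) for _ in range(hidden)]
          for _ in range(3)]

    def inr(x, y):
        # Hidden layer with a sine activation (an assumed, common INR choice).
        h = [math.sin(row[0] * x + row[1] * y + b) for row, b in zip(w1, b1)]
        # A sigmoid squashes each of the three output channels into (0, 1).
        return tuple(
            1 / (1 + math.exp(-sum(w * v for w, v in zip(row, h))))
            for row in w2
        )

    return inr


def render(inr, size):
    """Sample the INR on a size x size grid of coordinates in [0, 1]."""
    step = 1 / (size - 1)  # requires size >= 2
    return [[inr(j * step, i * step) for j in range(size)] for i in range(size)]


inr = make_inr()
low = render(inr, 5)   # 5 x 5 sampling of the image
high = render(inr, 9)  # 9 x 9 sampling of the same underlying function
```

Because both grids query the same function, every pixel of `low` coincides with the pixel of `high` at the same coordinate. This is the sense in which the representation is continuous: resolution is a sampling choice, not a property of the model.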

Paper

paper

Code

Web Page: HyperCGAN

Citation

@InProceedings{Haydarov_2024_CVPR,
  author = {Haydarov, Kilichbek and Muhamed, Aashiq and Shen, Xiaoqian and Lazarevic, Jovana and Skorokhodov, Ivan and Galappaththige, Chamuditha Jayanga and Elhoseiny, Mohamed},
  title = {Adversarial Text to Continuous Image Generation},
  booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
  month = {June},
  year = {2024},
  pages = {6316-6326}
}